Filter By

59 Repositories

Python

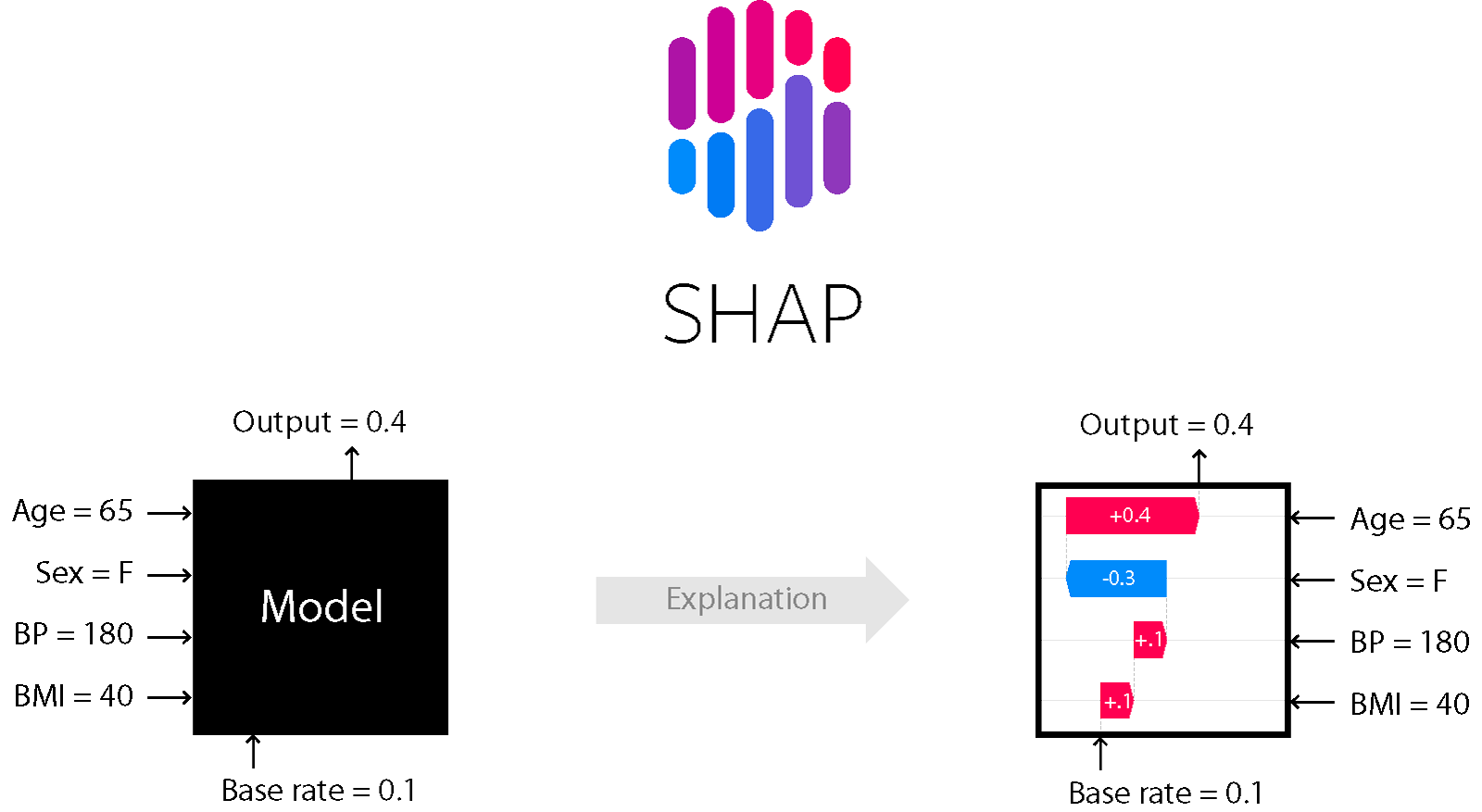

A game theoretic approach to explain the output of any machine learning model.

SHAP (SHapley Additive exPlanations) is a game theoretic approach to explain the output of any machine learning model. It connects optimal credit allo

A collection of infrastructure and tools for research in neural network interpretability.

Lucid Lucid is a collection of infrastructure and tools for research in neural network interpretability. We're not currently supporting tensorflow 2!

tensorboard for pytorch (and chainer, mxnet, numpy, ...)

tensorboardX Write TensorBoard events with simple function call. The current release (v2.1) is tested on anaconda3, with PyTorch 1.5.1 / torchvision 0

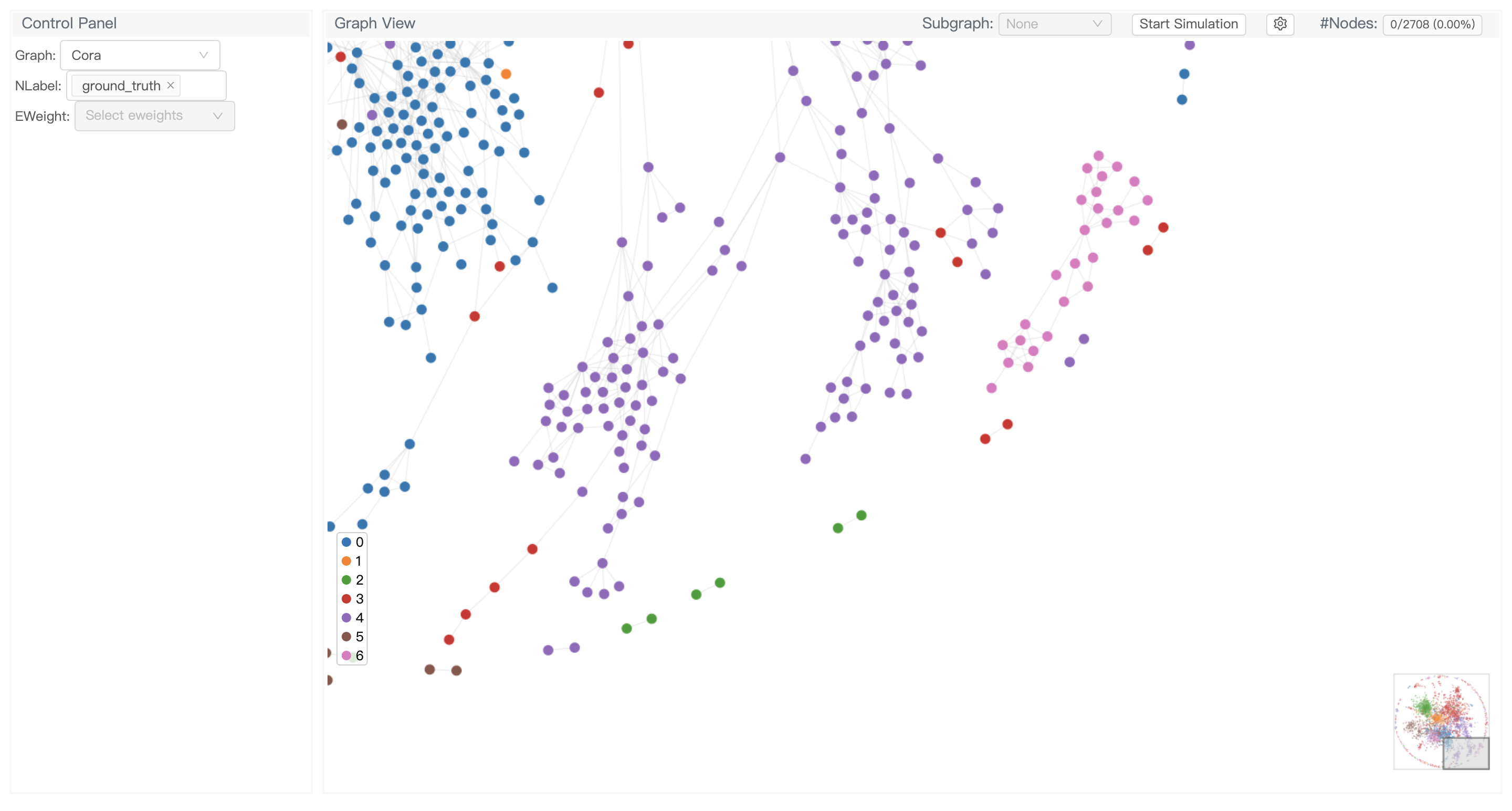

GNNLens2 is an interactive visualization tool for graph neural networks (GNN).

GNNLens2 is an interactive visualization tool for graph neural networks (GNN).

Bias and Fairness Audit Toolkit

The Bias and Fairness Audit Toolkit Aequitas is an open-source bias audit toolkit for data scientists, machine learning researchers, and policymakers

JittorVis - Visual understanding of deep learning model.

JittorVis - Visual understanding of deep learning model.

Code for "High-Precision Model-Agnostic Explanations" paper

Anchor This repository has code for the paper High-Precision Model-Agnostic Explanations. An anchor explanation is a rule that sufficiently “anchors”

Portal is the fastest way to load and visualize your deep neural networks on images and videos 🔮

Portal is the fastest way to load and visualize your deep neural networks on images and videos 🔮

TensorFlowTTS: Real-Time State-of-the-art Speech Synthesis for Tensorflow 2 (supported including English, Korean, Chinese, German and Easy to adapt for other languages)

🤪 TensorFlowTTS provides real-time state-of-the-art speech synthesis architectures such as Tacotron-2, Melgan, Multiband-Melgan, FastSpeech, FastSpeech2 based-on TensorFlow 2. With Tensorflow 2, we c

Visualization Toolbox for Long Short Term Memory networks (LSTMs)

Visualization Toolbox for Long Short Term Memory networks (LSTMs)

Pytorch implementation of convolutional neural network visualization techniques

Convolutional Neural Network Visualizations This repository contains a number of convolutional neural network visualization techniques implemented in

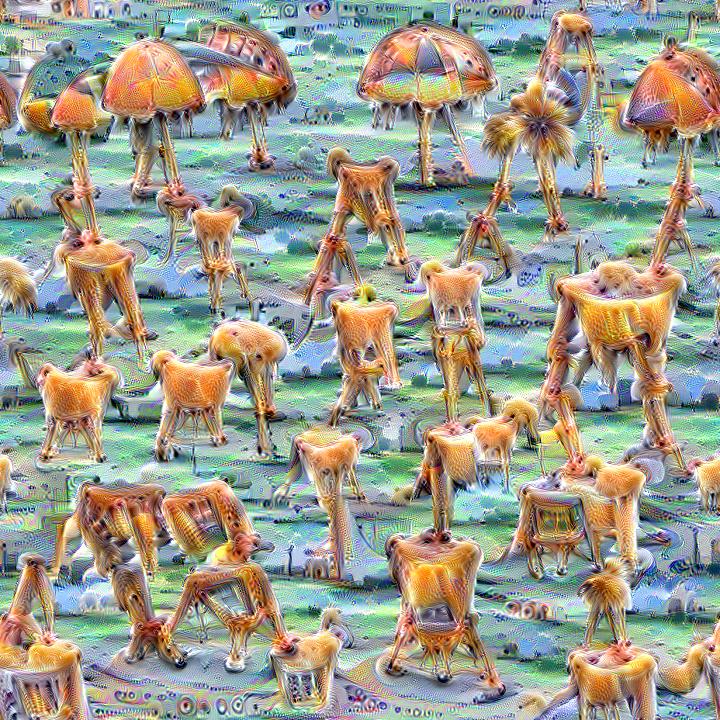

Quickly and easily create / train a custom DeepDream model

Dream-Creator This project aims to simplify the process of creating a custom DeepDream model by using pretrained GoogleNet models and custom image dat

Using / reproducing ACD from the paper "Hierarchical interpretations for neural network predictions" 🧠 (ICLR 2019)

Hierarchical neural-net interpretations (ACD) 🧠 Produces hierarchical interpretations for a single prediction made by a pytorch neural network. Offic

👋🦊 Xplique is a Python toolkit dedicated to explainability, currently based on Tensorflow.

👋🦊 Xplique is a Python toolkit dedicated to explainability, currently based on Tensorflow.

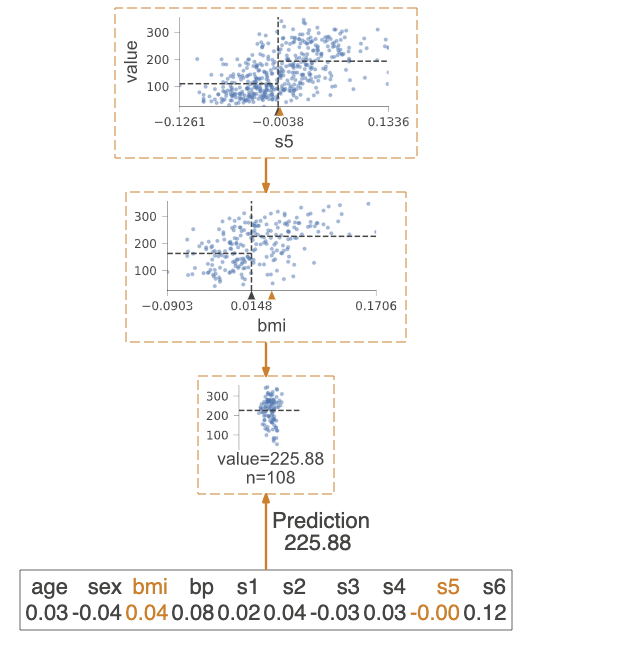

A python library for decision tree visualization and model interpretation.

dtreeviz : Decision Tree Visualization Description A python library for decision tree visualization and model interpretation. Currently supports sciki

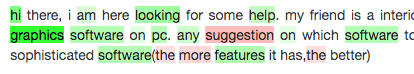

Lime: Explaining the predictions of any machine learning classifier

lime This project is about explaining what machine learning classifiers (or models) are doing. At the moment, we support explaining individual predict

Tool for visualizing attention in the Transformer model (BERT, GPT-2, Albert, XLNet, RoBERTa, CTRL, etc.)

Tool for visualizing attention in the Transformer model (BERT, GPT-2, Albert, XLNet, RoBERTa, CTRL, etc.)

Many Class Activation Map methods implemented in Pytorch for CNNs and Vision Transformers. Including Grad-CAM, Grad-CAM++, Score-CAM, Ablation-CAM and XGrad-CAM

Class Activation Map methods implemented in Pytorch pip install grad-cam ⭐ Comprehensive collection of Pixel Attribution methods for Computer Vision.

An intuitive library to add plotting functionality to scikit-learn objects.

Welcome to Scikit-plot Single line functions for detailed visualizations The quickest and easiest way to go from analysis... ...to this. Scikit-plot i

Algorithms for monitoring and explaining machine learning models

Alibi is an open source Python library aimed at machine learning model inspection and interpretation. The focus of the library is to provide high-qual

Visual analysis and diagnostic tools to facilitate machine learning model selection.

Yellowbrick Visual analysis and diagnostic tools to facilitate machine learning model selection. What is Yellowbrick? Yellowbrick is a suite of visual

A library for debugging/inspecting machine learning classifiers and explaining their predictions

ELI5 ELI5 is a Python package which helps to debug machine learning classifiers and explain their predictions. It provides support for the following m

python partial dependence plot toolbox

PDPbox python partial dependence plot toolbox Motivation This repository is inspired by ICEbox. The goal is to visualize the impact of certain feature

⬛ Python Individual Conditional Expectation Plot Toolbox

⬛ PyCEbox Python Individual Conditional Expectation Plot Toolbox A Python implementation of individual conditional expecation plots inspired by R's IC

A library that implements fairness-aware machine learning algorithms

Themis ML themis-ml is a Python library built on top of pandas and sklearnthat implements fairness-aware machine learning algorithms. Fairness-aware M

Code for visualizing the loss landscape of neural nets

Visualizing the Loss Landscape of Neural Nets This repository contains the PyTorch code for the paper Hao Li, Zheng Xu, Gavin Taylor, Christoph Studer

Interpretability and explainability of data and machine learning models

AI Explainability 360 (v0.2.1) The AI Explainability 360 toolkit is an open-source library that supports interpretability and explainability of datase

Visualization toolkit for neural networks in PyTorch! Demo -->

FlashTorch A Python visualization toolkit, built with PyTorch, for neural networks in PyTorch. Neural networks are often described as "black box". The